Instagram to Warn Parents When Teens Search for Self-Harm Content

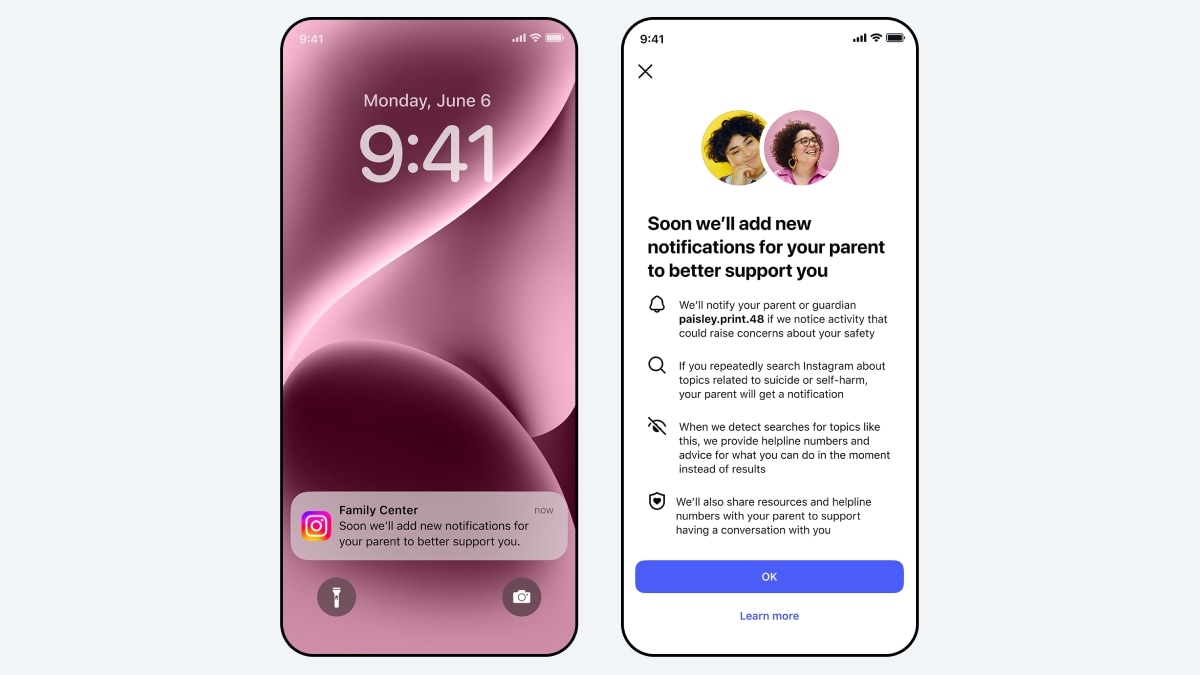

The new parental notification feature, which Meta announced for Instagram on Thursday, is designed to identify potential warning signs in teen search activity. It will alert parents when supervised teens repeatedly try to look up material linked to suicide or self-harm in a short period of time, the company said. The feature extends Instagram’s existing safety measures and will launch first in select English-speaking markets, with a wider release to follow later this year. Last year, Instagram placed all users under 18 in a stricter 13+ setting and required parental approval to change it.

Meta to Notify Parents if Teens Search Self-Harm Content on Instagram

In its announcement, Meta said that Instagram will begin alerting parents if their teen repeatedly searches for terms related to suicide or self-harm within a short period. The company did not specify the length of that period or the number of searches required. The feature will roll out next week in Australia, Canada, the UK, and the US, with other regions to follow later this year.

Alerts will go only to parents who have enabled Instagram’s parental supervision tools. Both the parent and the teen connected through supervision will be informed before the feature goes live. After that, a notification will be sent to the parent when the teen repeatedly attempts to look up phrases that promote suicide or self-harm, suggest an intention to hurt themselves, or include related keywords.

Alerts will be delivered via email, text message or WhatsApp, as well as an in-app notification, depending on available contact details, the company added. Opening the alert brings up a full-screen message explaining that the teen has made repeated search attempts and providing access to expert advice for parents on how to approach the conversation.

In Meta’s words, it already blocks searches “which are clearly linked to suicide or self-harm” and redirects users to support resources and helplines. The company said it analysed search behaviour and consulted members of its Suicide and Self-Harm Advisory Group to determine how many searches should be required before parents are notified. It acknowledged that some alerts may be sent even when there is no immediate threat, but said the approach seeks to err on the side of caution while avoiding excessive notifications.

Meta added that later this year it will introduce similar notifications for some teen interactions with its AI tools. These alerts will tell parents if a teen attempts to engage in certain conversations about suicide or self-harm with the company’s AI systems.

| Helplines | Contact |
| --- | --- |
| Vandrevala Foundation for Mental Health | 9999666555 or [email protected] |
| TISS iCall | 022-25521111 (Monday-Saturday: 8 am to 10 pm) |

(If you need support or know someone who does, please reach out to your nearest mental health specialist.)
